Navigating a STEM thesis in the United States is less like a straight sprint and more like an iterative debugging process. Much like software development, the research journey is riddled with “bugs”—methodological errors, data silos, and logic gaps—that can stall graduation or compromise the integrity of the work. In the competitive landscape of American R1 universities, where innovation is the currency, avoiding these common pitfalls is essential for any graduate student aiming for a high-impact publication.

The High Stakes of US STEM Research

The US National Science Foundation (NSF) continues to emphasize rigorous reproducibility and data management as pillars of federal funding. For a student, this means the bar for “valid research” has never been higher. However, the transition from structured coursework to the ambiguity of original research often leads to systematic errors. Whether you are modeling climate patterns at MIT or conducting proteomics research at Stanford, the “bugs” in your thesis usually stem from foundational oversights rather than a lack of effort.

When the technical complexity becomes overwhelming, many students seek external support to audit their methodology. Utilizing professional myassignmenthelp services alongside specialized research paper help ensures that you receive expert guidance across various scientific disciplines, helping to refine your data architecture and argumentative logic.

1. The “Scope Creep” Bug: Over-Engineering the Hypothesis

One of the most frequent pitfalls in US graduate programs is the “Nobel Prize Syndrome.” Students often attempt to solve a decade-long industry problem in a single two-year thesis.

- The Pitfall: Selecting a hypothesis that is too broad, leading to a lack of depth and “thin” data.

- The Debug: Use the S.M.A.R.T. criteria (Specific, Measurable, Achievable, Relevant, Time-bound). In US STEM culture, a perfectly executed narrow study is prioritized over a messy, broad one. Seeking research paper help early can help narrow this focus before you invest months into an unmanageable topic.

2. Data Management and Reproducibility Gaps

The “Reproducibility Crisis” is a major talking point in American science. In a Nature survey of more than 1,500 scientists (Baker, 2016), over 70% of researchers reported that they had tried and failed to reproduce another scientist’s experiments.

- The Pitfall: Poor documentation in lab notebooks or failure to version-control code (e.g., not tracking analysis scripts in Git and hosting them on a service such as GitHub or Bitbucket).

- The Debug: Implement a Data Management Plan (DMP) from day one. Ensure your metadata follows the FAIR principles: Findable, Accessible, Interoperable, and Reusable.
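One small, concrete step toward the FAIR principles is to store a machine-readable metadata record next to every data file. The sketch below is only an illustration under stated assumptions: the field names, the `.meta.json` suffix, and the helper names `sha256_of`, `build_metadata`, and `write_sidecar` are hypothetical, not part of any NSF or FAIR specification. Your own DMP or repository will likely prescribe its own schema.

```python
import hashlib
import json
from datetime import datetime, timezone
from pathlib import Path


def sha256_of(path: Path) -> str:
    """Return the SHA-256 hex digest of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_metadata(data_path: Path, title: str, creator: str, license_id: str) -> dict:
    """Assemble a metadata record for one data file (hypothetical schema)."""
    return {
        "title": title,                        # helps others find the data
        "creator": creator,
        "license": license_id,                 # states the reuse terms
        "file_name": data_path.name,
        "format": data_path.suffix.lstrip("."),
        "size_bytes": data_path.stat().st_size,
        "sha256": sha256_of(data_path),        # lets others verify integrity
        "recorded_utc": datetime.now(timezone.utc).isoformat(),
    }


def write_sidecar(data_path: Path, metadata: dict) -> Path:
    """Write the record as JSON next to the data file and return its path."""
    sidecar = data_path.with_name(data_path.name + ".meta.json")
    sidecar.write_text(json.dumps(metadata, indent=2), encoding="utf-8")
    return sidecar
```

Calling `write_sidecar(path, build_metadata(path, "Pilot run", "A. Student", "CC-BY-4.0"))` after each data export would leave a checksum and description alongside the raw file. Committing the sidecar to version control while keeping large raw files elsewhere is one possible design choice, not a requirement.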

3. Cross-Disciplinary Logic Failures

Often, STEM students get so “tunnel-visioned” into their specific niche—be it Organic Chemistry or Quantum Computing—that they forget the universal rules of academic inquiry. Diversifying your reading can prevent cognitive bias. For instance, exploring child development research topics might seem unrelated, but it provides excellent examples of how to manage longitudinal variables and control groups, which are critical skills in any biological or social science STEM track.

4. Statistical Underpowering

In fields like bioengineering or data science, underpowered study designs and the misuse of p-values are common reasons for thesis rejection during the defense.

- The Pitfall: Relying on a sample size $n$ that is too small to detect a true effect, leading to Type II errors.

- The Debug: Conduct a Power Analysis before data collection begins. US faculty committees look for a clear justification of sample size based on expected effect sizes and desired statistical power (typically 0.80 or higher).
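To make the power analysis concrete, the following is a minimal sketch of a sample-size estimate for comparing two group means. It assumes a two-sided test, equal group sizes, a standardized effect size (Cohen's d), and the normal approximation, which tends to give a slightly smaller n than an exact t-based calculation. The function name `sample_size_per_group` and its defaults are illustrative assumptions, and a dedicated statistics package is the safer choice for the numbers you report to a committee.

```python
import math
from statistics import NormalDist


def sample_size_per_group(effect_size: float, alpha: float = 0.05, power: float = 0.80) -> int:
    """Approximate n per group for a two-sided, two-sample comparison of means.

    Uses the normal approximation n = 2 * ((z_{1-alpha/2} + z_{power}) / d)^2,
    where d is the expected standardized effect size (Cohen's d).
    """
    if effect_size <= 0:
        raise ValueError("effect_size must be positive")
    if not (0 < alpha < 1 and 0 < power < 1):
        raise ValueError("alpha and power must lie strictly between 0 and 1")

    standard_normal = NormalDist()
    z_alpha = standard_normal.inv_cdf(1 - alpha / 2)  # critical value for the test
    z_power = standard_normal.inv_cdf(power)          # quantile for the desired power

    n = 2 * ((z_alpha + z_power) / effect_size) ** 2
    return math.ceil(n)  # round up: participants come in whole numbers


if __name__ == "__main__":
    for d in (0.2, 0.5, 0.8):
        print(f"d = {d}: about {sample_size_per_group(d)} per group")
```

Under these assumptions, a medium effect (d = 0.5) at alpha = 0.05 and power = 0.80 works out to about 63 participants per group, while a small effect (d = 0.2) needs several hundred. Seeing that gap early is the point of running the analysis before data collection.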

Key Takeaways for STEM Researchers

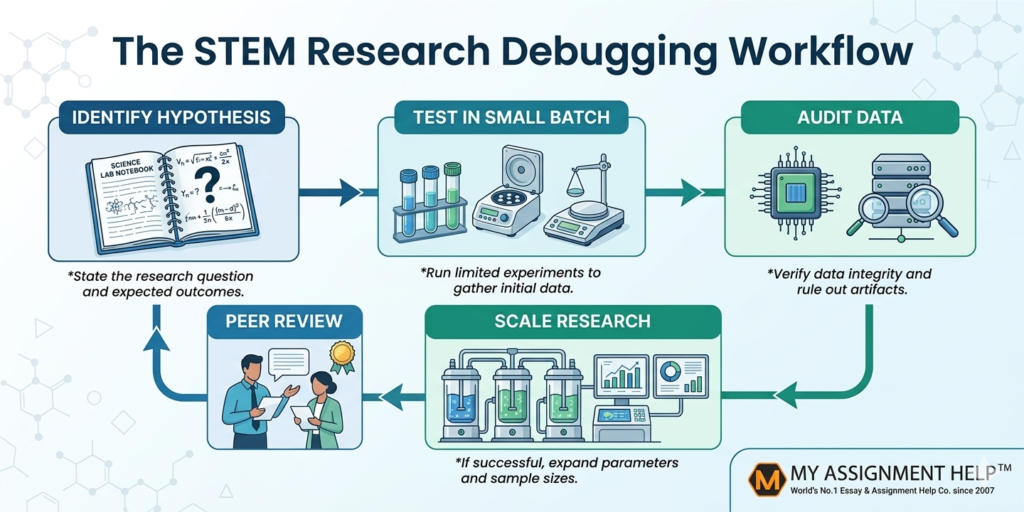

- Iterate Early: Treat your first draft as a “beta version.” Seek feedback before the final defense to catch logic errors.

- Automate Documentation: Use LaTeX for formatting and Zotero or Mendeley for citation management to avoid manual bibliography errors.

- Validate Methodology: Always cross-reference your methods with recent peer-reviewed literature from databases like IEEE Xplore or PubMed.

- Prioritize Ethics: Ensure all IRB or IACUC approvals are secured and documented before a single data point is collected.

Technical Comparison: Traditional vs. Robust Research Workflows

| Feature | Traditional Workflow (Error-Prone) | Robust STEM Workflow (Debugged) |
| --- | --- | --- |
| Literature Review | Linear reading; manual notes | Systematic review; reference mapping |
| Data Storage | Local hard drives; spreadsheets | Cloud-based; SQL/NoSQL databases |
| Analysis | Manual calculation/Excel | R/Python scripts with version control |
| Peer Review | Post-completion feedback | Continuous “lab-meeting” iterations |

Frequently Asked Questions (FAQ)

Q1: How do I handle a “Null Result” in my STEM thesis?

In the US academic system, a null result is not a failure. As long as your methodology was rigorous and your study was sufficiently powered, a null result is a valid scientific contribution that prevents future researchers from wasting resources on a dead-end path.

Q2: What is the most important section of a US STEM thesis?

While the “Results” are exciting, the “Materials and Methods” section is the most critical for your committee. It shows that your findings are not a fluke and can be replicated, which is the foundation of scientific credibility.

Q3: Is it common to change my hypothesis halfway through?

Yes. This is often referred to as “pivoting.” In STEM research, if the initial data suggests your premise is flawed, pivoting demonstrates a high level of scientific maturity, provided you document the transition logically.

Author Bio

Dr. Aris Thorne is a Senior Research Consultant at myassignmenthelp, specializing in STEM academic strategy and technical communication. With over 12 years of experience in academic mentoring, Dr. Thorne has helped thousands of students in the US and UK navigate the complexities of thesis defense. His work focuses on bridging the gap between raw data and impactful academic storytelling, ensuring all content meets the highest standards of academic integrity.

References:

- National Science Foundation (NSF), 2025. “Data Management Guidelines.”

- Baker, M., 2016. “1,500 scientists lift the lid on reproducibility.” Nature.

- American Statistical Association, 2024. “Statement on P-values and Statistical Significance.”